About ESP (the Flight Simulator platform) (2008 - 2009)

ESP was a start-up group inside ACES studio. The ESP product grew out of the Flight Simulator platform: a training and simulation environment that modeled the world in great detail and allowed users to modify and expand it. The product was quite complex, consisting of a variety of tools and plug-ins for users ranging from artists to flight trainers to developers.

My Role

As Senior UX Designer, I designed the end-to-end experience for a variety of user types (trainees, trainers, commanders, etc.). I also needed to understand what development work each design would require in a 30-year-old code base.

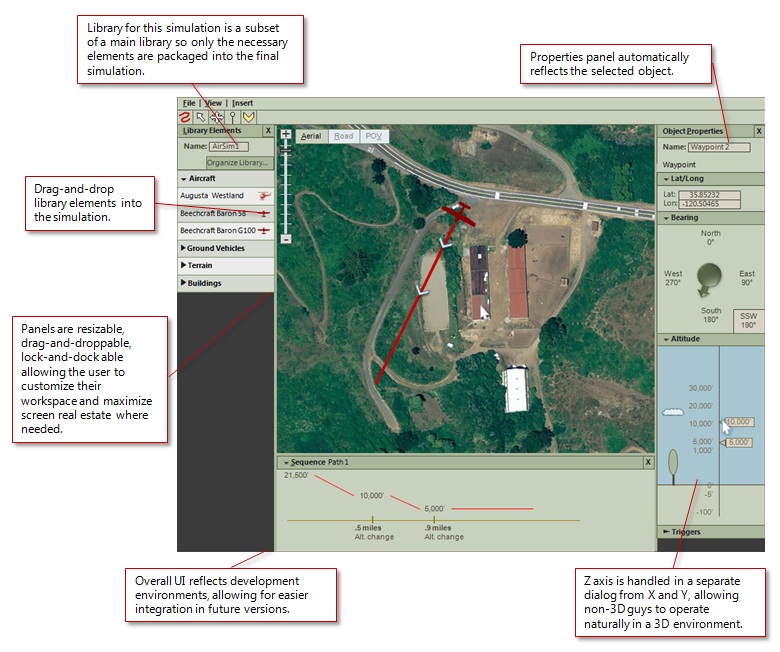

About the 3D Simulation Environment

The simulation environment (a.k.a. "Scenario Editor") was a way for people to create flight- and ground-based scenarios, which involved both building the environment and setting up actions. The package included the world that you see in Flight Simulator and tools to modify that world by adding more detail to the terrain and creating paths and AI. For example, the user could select an area of the Nevada desert, place trees in specific locations, and add a military installation including all of the relevant buildings and roads. They could then add a variety of vehicles that would either follow a specific route or respond to the vehicles around them, and create a path that the trainee would need to follow. The product was geared toward training, so a typical scenario could be "Fly from point A to point B, lower the winch in the helicopter and pick up the supplies, then fly back to point A within a specified time frame."

The Audience

The audience for the Scenario Editor was trainers and possibly commanders, who were not familiar with 3D environments. The person creating the scenarios was an expert in knowing what kinds of situations a pilot or driver would need to respond to, not in creating 3D objects or navigating 3D environments. The Scenario Editor therefore needed to reflect both their needs and their knowledge base.

The Simulator UX

Because the product revolved around the placement of objects in space (rather than the creation of those 3D objects) and because the audience was not familiar with working in typical 3D environments, my approach revolved around a map and instrument panels. Location (XY) and altitude (Z) would be addressed separately, as would bearing (direction) and pitch and yaw. The goal was to make the simulated world resemble the real world, where each of these is measured and/or recorded by different instruments.
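As a rough illustration of that separation (a minimal sketch, not ESP code; the `Placement` class, its field names, and the helper functions are all hypothetical), each quantity can be modeled as its own value that one panel edits without touching the others:

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Placement:
    """Hypothetical record for one object placed in a scenario."""
    x: float = 0.0         # map position (set by drag-and-drop on the map)
    y: float = 0.0
    altitude: float = 0.0  # set separately, like reading an altimeter
    bearing: float = 0.0   # direction in degrees, kept in the range [0, 360)
    pitch: float = 0.0
    yaw: float = 0.0


def move_on_map(p: Placement, x: float, y: float) -> Placement:
    """Change only the map location; altitude and orientation are untouched."""
    return replace(p, x=x, y=y)


def set_altitude(p: Placement, altitude: float) -> Placement:
    """Change only the altitude; the map location is untouched."""
    return replace(p, altitude=altitude)


def set_bearing(p: Placement, bearing: float) -> Placement:
    """Change only the bearing, wrapping it into [0, 360)."""
    return replace(p, bearing=bearing % 360)


# Drop an object on the map first, then pick its altitude in a separate step.
truck = move_on_map(Placement(), 10.0, 20.0)
truck = set_altitude(truck, 1500.0)
```

Keeping each update independent mirrors the instrument-panel idea: a trainer adjusts one reading at a time and never has to manipulate a full 3D transform.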

Also, because our users might be working on laptops, I could not assume multiple monitors (or even a mouse), so screen real estate was an issue. I therefore created something that would work on a small screen but would let users take advantage of larger screens by moving and resizing the different panels.

Scenario Creation Walkthrough

The environment is designed to allow a trainer to design a simulation by using familiar commands and UI elements: all they would ever need to do is type, drag-and-drop, click, and right-click. For instance, to place an object in 3D space, they would simply need to select the object from a menu, drag and drop it to the desired location, and then select the altitude of that location in a different menu. They could select the POV to see what the trainee would see, and modify the location, altitude, pitch and yaw, etc. from that perspective, allowing them to control what the trainee would and would not see. The video below is a walkthrough of what this experience would be.

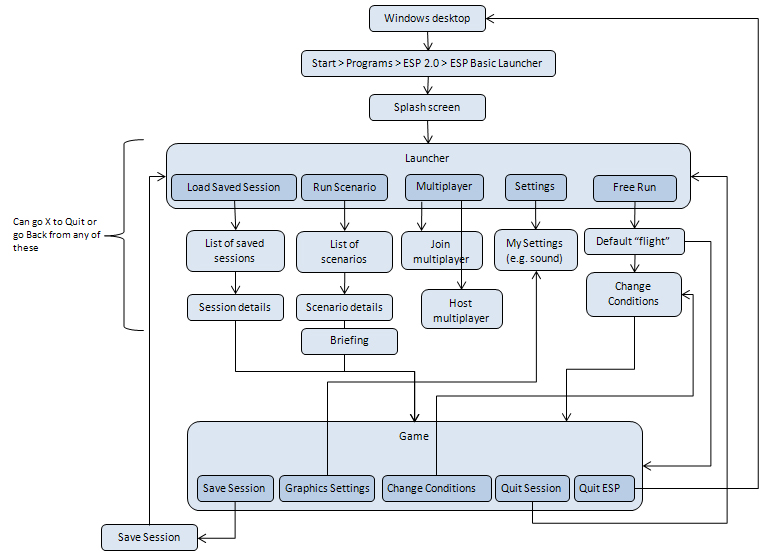

Launcher Flows

Goals: Create a way for users to run simulations out of the box. The product is a platform, so we expect them to create their own launch environment, but we need to provide a starting point. This starting point needed not only to provide functionality, but also to be skinnable (for rebranding) and modifiable for those customers who did not want to build their own complete environment.

I was responsible for the user experience of the entire Launcher. One of the first things that I needed to do was to identify what we were building vs. what we were enabling, and part of my UX work was to make sure that we were enabling the correct things even if we were not building them.

A simplified flow of the Launcher as a whole looks something like this:

The "Change Conditions" box is actually an entire complex flow in itself.

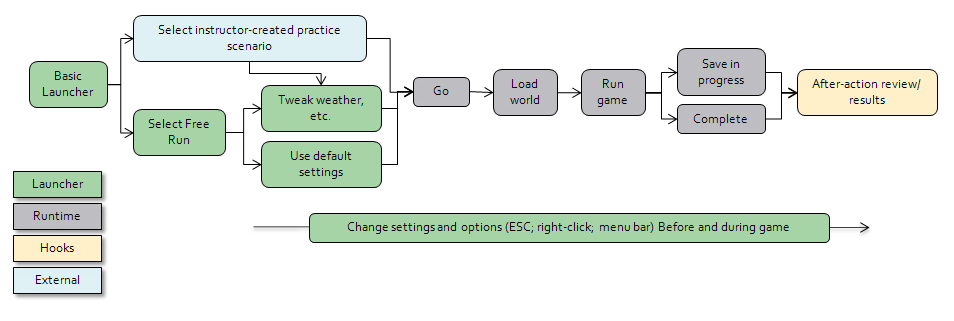

It's also important to note that within the course of a single user task (e.g. "Practice flying this vehicle") the user flow crosses several applications: some would be in the box, some we would provide hooks for, and some would be created externally but displayed within the Launcher:

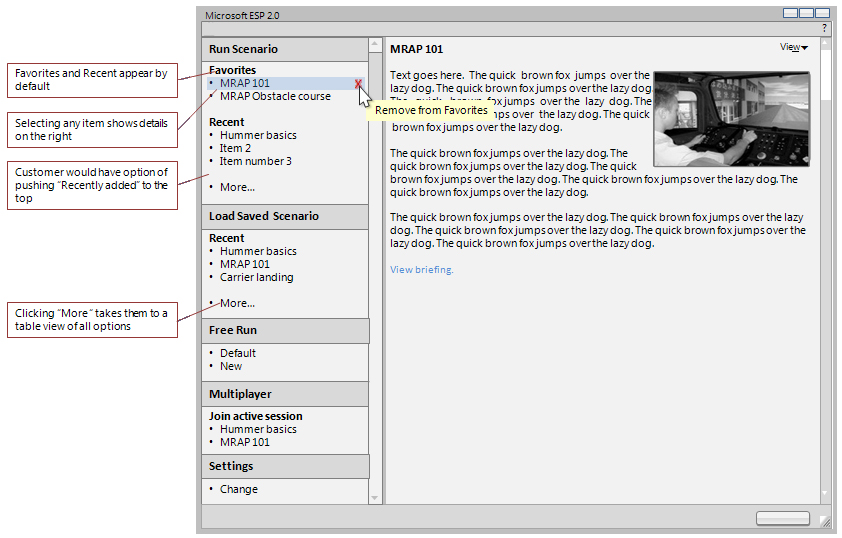

I also needed to differentiate between what a solution provider/developer could do with the launcher vs. what an end user was expected to do. For example, while an end user could add or remove items from their Favorites or rearrange the columns in a table, only a developer could change the menus or reskin the application.
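That split can be sketched as a simple capability check. This is only an illustration under stated assumptions: the `Role` enum, the action names, and the `can_perform` function are hypothetical, and the sketch assumes that a developer can also do everything an end user can.

```python
from enum import Enum


class Role(Enum):
    END_USER = "end_user"
    DEVELOPER = "developer"  # solution provider/developer


# Hypothetical action names taken from the examples in the text above.
END_USER_ACTIONS = frozenset({"edit_favorites", "rearrange_columns"})
DEVELOPER_ONLY_ACTIONS = frozenset({"change_menus", "reskin"})


def can_perform(role: Role, action: str) -> bool:
    """Return True if the given role may perform the action."""
    if action in END_USER_ACTIONS:
        return True  # assumption: developers can also do end-user actions
    if action in DEVELOPER_ONLY_ACTIONS:
        return role is Role.DEVELOPER
    return False  # unknown actions are denied by default
```

For example, `can_perform(Role.END_USER, "reskin")` returns `False`, while the same call with `Role.DEVELOPER` returns `True`.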

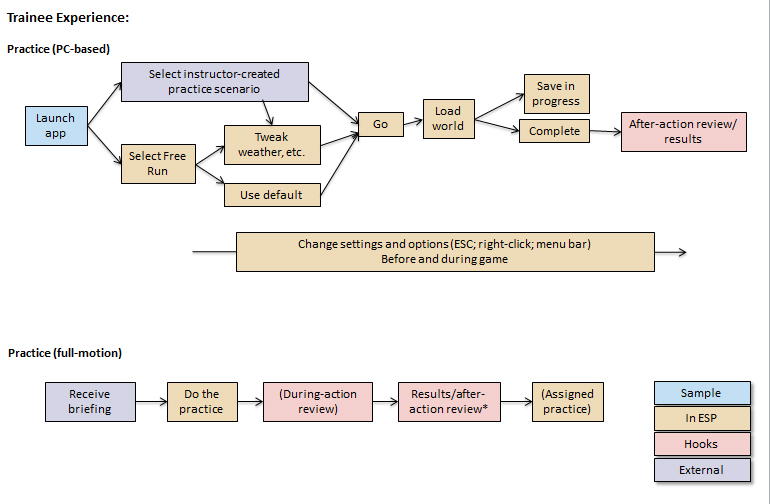

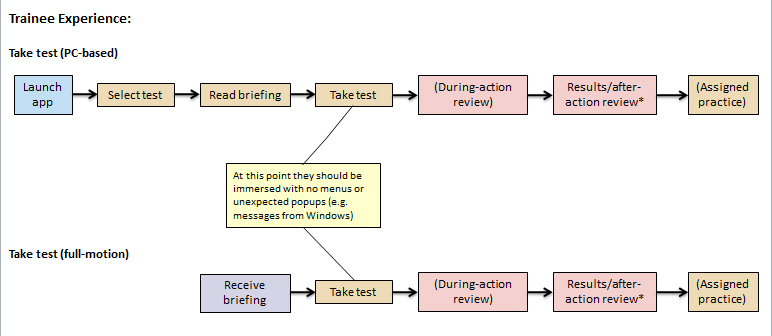

Because the training process varied depending on whether you were in practice mode or taking a test (namely, whether you could change the settings) and whether you were using a full-motion simulator or a laptop, I drew simple flow diagrams to show those differences:

Launcher UI

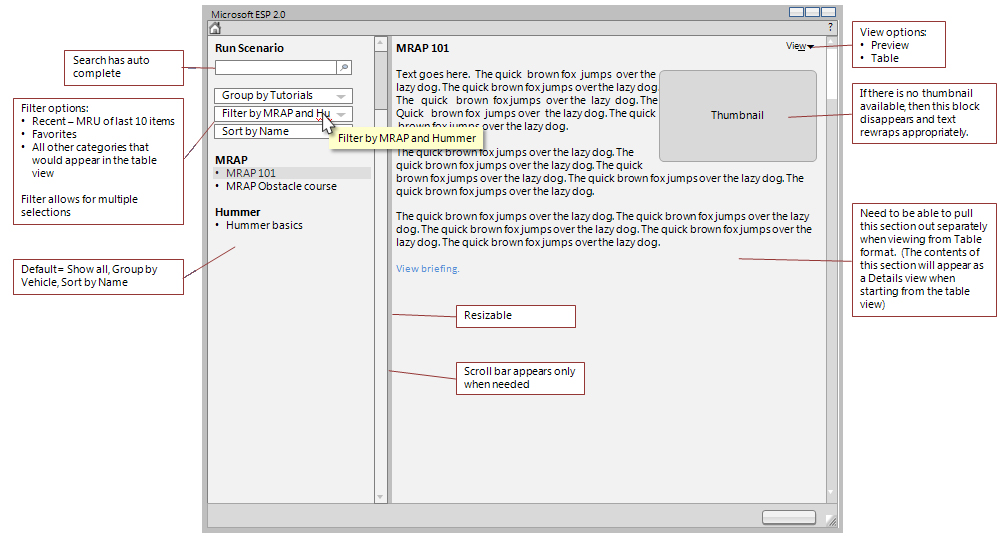

Because our first customers were going to be military, the UI needed to NOT look like a video game. One of the challenges was coming up with a design that was flexible enough to allow for extension and modification and yet would provide consistency throughout the out-of-box experience. That flexibility was needed because of the variety of tasks that the user does from within the Launcher, the fact that solution providers will be able to reskin it and modify the menus, and the fact that any new scenarios they create will appear in the list of scenarios along with the ones in the box. I went through several iterations; the final one (before the product was cut) had a Preview Mode and a Table Mode.

Preview Mode

Goals:

- User can quickly browse through options and see details about them

- User can filter and sort the available options

- User can quickly access Favorites and Recent

- User can search for specific items

- Flexibility in the Preview Pane: There may be no thumbnail and the amount of text in the Preview Pane may vary greatly

- Flexibility in the List Pane: Customers may add or remove items and create their own categories

The default view when you start the Launcher would be a modified version of Preview Mode set to show Favorites and Recent items:

Clicking on any item would show details on the right side, and clicking "More" would take the user to a table view showing all available options. This transition is the part of the user experience that I would most want to test through dynamic prototypes and usability testing.

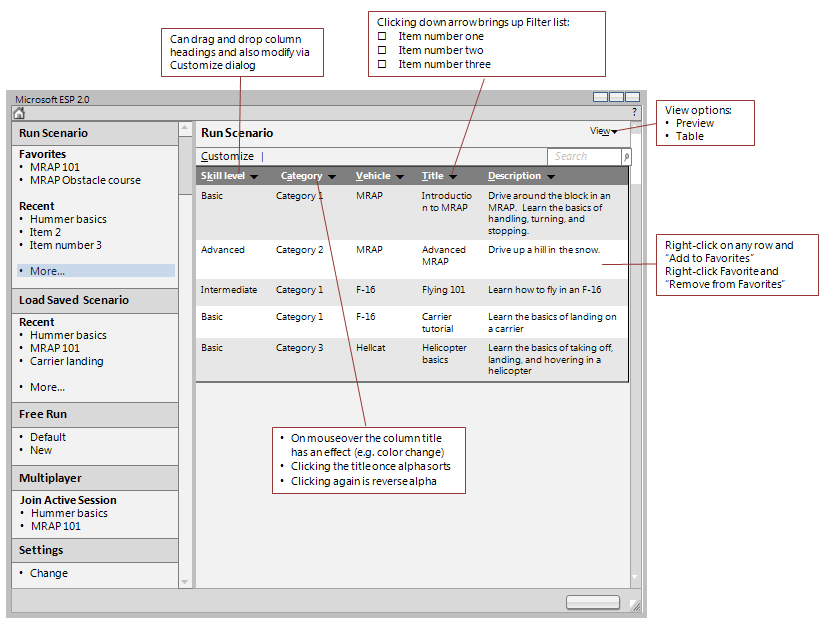

Table Mode

Goals:

- One-click access to everything (not elegant, just quick)

- User can filter and sort the available options

- Flexibility: Customers can add and remove scenarios and categories

Free Run Conditions

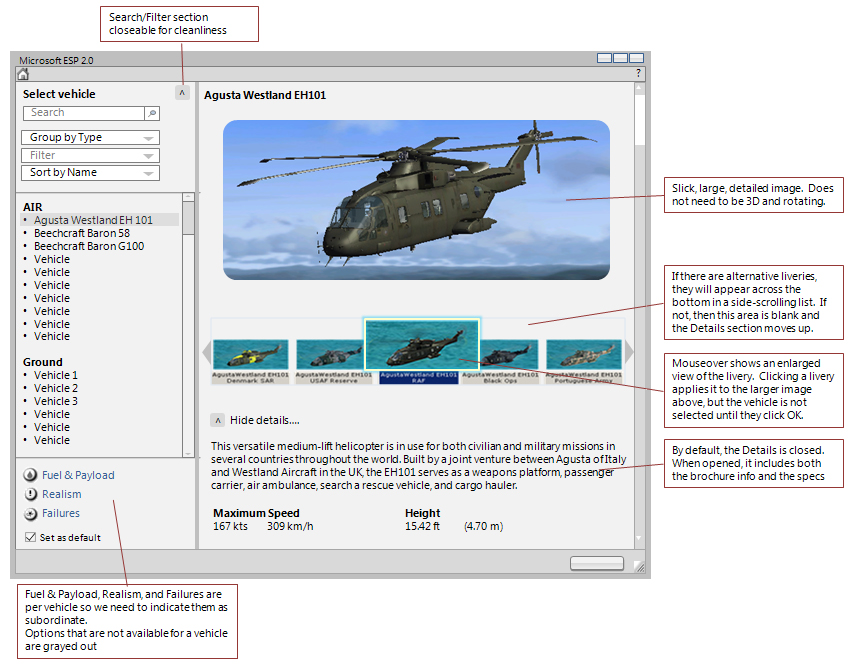

Challenges: These are essentially the Free Flight conditions that you see in Flight Simulator, with the added complexity that the same dialogs are not only shared with Flight Simulator, but are also used in three places within ESP: the Basic Launcher, the runtime, and the Scenario Editor. The design therefore needed to be flexible enough to allow for use in each of those environments. Some of the flows within each of the sections were also quite complicated. The options available to the user to set include: Vehicle, Fuel & Payload, Realism, Failures, Location, Time & Season, and Weather. Within each of these there are a variety of settings. Below is the initial Select Vehicle screen, since this is one of the more intertwined sections (not only does it have several levels of its own, but it is also shared across ESP and Flight Simulator):

It is a complex screen, and I think perhaps a little busy, but all of the elements are required. I tried to make the left side less busy by making the Sort/Filter options collapsible (this would also provide more room vertically for a longer list). This is another section that I would want to build a dynamic prototype for so I could get a better feel for working with it.

I was quite sad when the product was cancelled, since not only was it a fun, fascinating, and complex piece of software, but it was also a great group of people.